Recent News

- Developing Organic Thin-Film Transistors into Biosensors February 17, 2025

The Department of Electronics and Communication Engineering is proud to announce that Dr Durga Prakash M and his scholar Ms Prasanthi Lingala have had their invention titled “An Organic Thin-Film Transistors (OTFTs) with Steep Subthreshold and Ultra-Low Temperature Solution Processing for Label-Free Biosensing” published in the Indian Patent Office Journal under Application Number 202541000088. Their research focuses on developing an Organic Thin-Film Transistor (OTFT) that can work as a biosensor for detecting diseases or for real-time health monitoring.

Abstract

Organic Thin-Film Transistor (OTFT): The name “organic thin-film transistor” (OTFT) refers to a type of transistor that uses organic semiconductor materials in its active layer instead of traditional inorganic materials such as silicon. OTFTs are distinguished by their adaptability and low fabrication cost, which make them well suited to lightweight, portable electronic devices. Because they are highly sensitive to changes in their surroundings and compatible with functionalised layers that detect biomolecules, these transistors are widely used in biosensors.
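The patent title refers to a “steep subthreshold” characteristic. This is commonly quantified by the subthreshold swing, SS = dV_GS / d(log10 I_D): the gate-voltage change needed to change the drain current by one decade, where a smaller SS means a sharper switch. For conventional field-effect transistors the thermionic limit is (kT/q)·ln 10, about 60 mV/decade at room temperature. The minimal Python sketch below estimates SS from a sampled transfer curve. The function names and the transfer-curve values are hypothetical, chosen for illustration, and are not data from the invention.

```python
import math

BOLTZMANN = 1.380649e-23             # J/K
ELEMENTARY_CHARGE = 1.602176634e-19  # C


def subthreshold_swing_mv_per_dec(vgs, ids):
    """Steepest subthreshold swing (mV/decade) found in a sampled transfer curve.

    vgs: gate-source voltages in volts, in sweep order.
    ids: drain currents in amperes at those voltages.
    SS is estimated between each pair of adjacent samples as
    |dV_GS| / |d(log10 |I_D|)|, and the smallest value is returned.
    Absolute values let the same code handle p-type devices (DNTT is p-type),
    where the current rises as V_GS becomes more negative.
    """
    best = math.inf
    for i in range(len(vgs) - 1):
        d_log = abs(math.log10(abs(ids[i + 1])) - math.log10(abs(ids[i])))
        if d_log == 0:
            continue  # current did not change, so SS is undefined for this step
        ss_mv = abs(vgs[i + 1] - vgs[i]) / d_log * 1000.0  # V/decade -> mV/decade
        best = min(best, ss_mv)
    return best


def thermionic_limit_mv_per_dec(temperature_k=300.0):
    """(kT/q) * ln(10): the lower bound on SS for conventional FETs."""
    return BOLTZMANN * temperature_k / ELEMENTARY_CHARGE * math.log(10) * 1000.0


# Hypothetical p-type sweep: current rises one decade per -0.1 V, then levels off.
vgs = [0.0, -0.1, -0.2, -0.3, -0.4]
ids = [1e-12, 1e-11, 1e-10, 1e-9, 2e-9]
print(round(subthreshold_swing_mv_per_dec(vgs, ids)))  # 100
print(round(thermionic_limit_mv_per_dec(), 1))         # 59.5
```

Taking the smallest adjacent-point estimate keeps the sketch short; with noisy measurements, fitting a straight line to log10 I_D over a chosen gate-voltage window is usually more robust.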

Explanation of the Research in Layperson’s Terms

Imagine a flexible electronic switch that can be bent, stretched, and used in lightweight devices—this is what an Organic Thin-Film Transistor (OTFT) does! Unlike traditional transistors made from rigid silicon, OTFTs use special organic materials, making them more adaptable for wearable sensors, flexible displays, and medical devices.

The research focuses on how these transistors can be used as biosensors, meaning they can detect tiny changes in the environment, like the presence of certain chemicals or biomolecules. This is important for medical testing, where OTFTs could help develop low-cost, highly sensitive diagnostic tools—imagine a simple patch that can detect diseases from sweat or a flexible sensor for real-time health monitoring! By improving how OTFTs interact with biological substances, the team aims to make them more accurate, efficient, and reliable for next-generation healthcare and wearable technology.


Fig.: Schematic structure of a DNTT-based OTFT

- A Novel System for Breast Cancer Diagnosis January 29, 2025

Faculty duo from the Department of Electronics and Communication Engineering, Dr Anirban Ghosh and Dr Sunil Chinnadurai, along with their research cohort, Phanindra Rayapudi Venkata, Baswala Srujana, Gadde Saranya, and Abburi Sowgandhi (B.Tech. ECE students) have published their patent titled “A System and Method for Breast Cancer Diagnosis” (Application number: 202441088356). Their cutting-edge research presents a system to help diagnose breast cancer more accurately and efficiently using advanced image analysis techniques.

Abstract

The present disclosure discloses a system for diagnosing breast cancer that utilizes topological data analysis to transform mammogram images into meaningful diagnostic insights. It includes a data preprocessing module for image standardization and enhancement and a feature extraction module to create histograms for topological analysis. The topological data analysis module converts these histograms into Persistent Homology Diagrams (PHDs) representing topological features. An Earth Mover’s Distance (EMD) matrix is generated by a similarity metric module to compare PHDs. Representative PHDs are identified using a representative selection module, enabling accurate classification by the classification module. The system’s performance is assessed through various metrics by a performance analysis module, and a web service module provides an intuitive interface for users to upload images and receive diagnostic results. This approach enhances breast cancer detection by focusing on persistent topological features, offering improved precision and interpretability.

Figure 1. Conversion of mammograms into PHDs
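The abstract states that histograms are converted into Persistent Homology Diagrams but does not specify the filtration used. One common construction for a 1-D histogram is 0-dimensional persistence of its superlevel sets: as a threshold is lowered from the tallest bin downwards, each peak gives birth to a connected component, and when two components meet, the one with the lower peak dies (the elder rule). The Python sketch below assumes that construction; the function name is hypothetical, and this is not necessarily the patented method.

```python
import numpy as np


def histogram_persistence(counts):
    """0-dimensional superlevel-set persistence of a 1-D histogram.

    Bins are added from the tallest count downwards. Each local peak effectively
    starts a connected component; when two components meet at a bin, the one
    with the lower peak dies at that bin's height (the elder rule). Returns an
    array of (birth, death) pairs with birth >= death. The component of the
    global maximum never merges, so here it is closed at min(counts).
    """
    counts = np.asarray(counts, dtype=float)
    n = len(counts)
    parent = [-1] * n   # -1 marks a bin not yet added
    birth = {}          # component root -> peak height where it was born

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path halving
            i = parent[i]
        return i

    pairs = []
    for i in np.argsort(-counts, kind="stable"):
        i = int(i)
        parent[i] = i
        birth[i] = counts[i]
        for j in (i - 1, i + 1):
            if 0 <= j < n and parent[j] != -1:
                ri, rj = find(i), find(j)
                if ri == rj:
                    continue
                # Elder rule: the component with the lower peak dies here.
                young, old = (ri, rj) if birth[ri] < birth[rj] else (rj, ri)
                if birth[young] > counts[i]:  # skip zero-persistence pairs
                    pairs.append((float(birth[young]), float(counts[i])))
                parent[young] = old
    root = find(int(np.argmax(counts)))
    pairs.append((float(birth[root]), float(counts.min())))
    return np.array(pairs)


print(histogram_persistence([0, 3, 1, 4, 0]).tolist())  # [[3.0, 1.0], [4.0, 0.0]]
```

Each (birth, death) pair is one point on the diagram. Its persistence, birth minus death, measures how prominent the corresponding peak is, so short-lived pairs lying close to the diagonal correspond to noise.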

Explanation of the Research in Layperson’s Terms

Here’s how the system works in simple terms:

1. Preparing the Images: Mammogram images are cleaned and adjusted to ensure they’re clear and easy to analyse. The focus is on areas that might show signs of cancer.

2. Extracting Patterns: The system looks for patterns in the images that could indicate healthy or unhealthy tissue. It turns these patterns into a visual map that represents the shape and structure of the tissue.

3. Analysing Shapes: The system uses math to study how these shapes appear and disappear as the image details change. The most persistent shapes (important ones) are kept, and random noise is ignored.

4. Comparing Images: A tool measures how similar or different these patterns are between images. This helps the system group them into healthy or cancerous categories.

5. Making a Decision: The system compares a new mammogram to its library of known patterns to decide whether it’s likely healthy or shows signs of cancer.

6. Easy to Use: Doctors can upload an image to a web-based tool and quickly get results, complete with visual explanations.
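Steps 4 and 5 compare diagrams using the Earth Mover’s Distance and then classify a new case against representative diagrams. The sketch below shows one standard way to compute the EMD (the 1-Wasserstein distance) between persistence diagrams. Points may also be matched to the diagonal, which turns the comparison into an optimal assignment problem solved with SciPy’s `linear_sum_assignment`. Choosing each class’s representative as its medoid (the diagram with the smallest total EMD to the rest of its class) and labelling a new case by its nearest representative are assumptions made for illustration. The abstract does not say how the representative selection and classification modules work, and all function names here are hypothetical.

```python
import numpy as np
from scipy.optimize import linear_sum_assignment


def diagram_emd(a, b):
    """Earth Mover's (1-Wasserstein) distance between two persistence diagrams.

    a, b: sequences of (birth, death) pairs. Any point may instead be matched
    to the diagonal (birth == death), so diagrams of different sizes compare.
    Ground metric: L-infinity distance between points.
    """
    a = np.asarray(a, dtype=float).reshape(-1, 2)
    b = np.asarray(b, dtype=float).reshape(-1, 2)
    m, n = len(a), len(b)
    cost = np.zeros((m + n, n + m))
    # Point-to-point costs.
    cost[:m, :n] = np.abs(a[:, None, :] - b[None, :, :]).max(axis=2)
    # Point-to-diagonal costs: L-infinity distance from (x, y) to the line y = x.
    cost[:m, n:] = (np.abs(a[:, 0] - a[:, 1]) / 2)[:, None]
    cost[m:, :n] = (np.abs(b[:, 0] - b[:, 1]) / 2)[None, :]
    # The diagonal-to-diagonal block stays 0.
    rows, cols = linear_sum_assignment(cost)
    return float(cost[rows, cols].sum())


def select_representative(diagrams):
    """Medoid of a class: the diagram with the smallest total EMD to the others."""
    k = len(diagrams)
    emd = np.array([[diagram_emd(diagrams[i], diagrams[j]) for j in range(k)]
                    for i in range(k)])
    return diagrams[int(emd.sum(axis=1).argmin())]


def classify(diagram, representatives):
    """Return the label whose representative diagram is nearest in EMD."""
    return min(representatives,
               key=lambda label: diagram_emd(diagram, representatives[label]))


# Toy diagrams, invented for illustration only.
healthy = [[[1.0, 0.0]], [[1.2, 0.0]], [[3.0, 0.0]]]
cancerous = [[[5.0, 0.0], [3.0, 1.0]], [[5.2, 0.0], [3.1, 1.0]]]
reps = {"healthy": select_representative(healthy),
        "cancerous": select_representative(cancerous)}
print(classify([[4.8, 0.0], [3.0, 1.2]], reps))  # cancerous
```

The L-infinity ground metric and the diagonal-matching construction are the usual conventions in topological data analysis. Other choices, such as a Euclidean ground distance or a higher Wasserstein order, would change the numbers but not the overall structure.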

This system helps doctors by making the diagnosis process faster, more reliable, and easier to understand, which can lead to earlier and better treatment for breast cancer.

Practical Implementation/Social Implications of the Research

This research enhances breast cancer detection by enabling earlier, more accurate diagnoses, which can improve survival rates. Its web-based tool brings advanced diagnostics to remote and underserved areas, reducing disparities in healthcare. By supporting radiologists with objective insights, the system can reduce errors and workload, especially in resource-limited settings. Patients benefit from faster, clearer results, leading to timely and cost-effective treatment. Additionally, the methods could inspire advances in diagnosing other diseases, driving broader medical progress and improving global health outcomes.

Future Research Plans

Future research could expand this system to detect other diseases like lung or liver cancer, improve diagnostic accuracy by reducing false results, and integrate multimodal data for comprehensive analysis. Incorporating patient-specific information for personalized risk assessments, creating self-learning models, and optimizing computational efficiency could enhance its adaptability. Large-scale global trials and user-friendly interfaces would ensure effective implementation across diverse populations and healthcare systems, making the technology more versatile, accessible, and impactful.

- Smart Solutions for Road Safety December 26, 2024

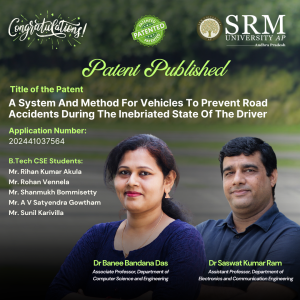

Dr Saswat Kumar Ram and Dr Banee Bandana Das from the Department of Electronics and Communication Engineering have developed an intelligent safety system to prevent road accidents caused by drowsiness and alcohol consumption. Their patented research, “A System and Method for Vehicles to Prevent Road Accidents During the Inebriated State of the Driver” (Indian Patent No. 202441037564), uses simple sensors and microcontrollers to provide real-time safety feedback, offering an affordable and effective solution for enhancing vehicle safety.

A Brief Abstract:

This research aims to improve the safety of vehicle control systems by integrating simple drowsiness and odour sensors into an intelligent safety management system. The main hardware components are a microcontroller, motor drivers, motors, an MQ-3 alcohol sensor, an IR sensor for drowsiness detection, a buzzer, and lights. The firmware is written in embedded C using the Arduino IDE. The system is designed to provide safety feedback in a variety of applications. The MQ-3 sensor detects alcohol vapour, indicating whether a person has been drinking. Our aim is to design a product that is inexpensive and easy to integrate into existing control systems, making it suitable for applications such as automobiles, workplaces, and homes. This research is a step towards improving the safety of control systems by using simple, inexpensive equipment for instant monitoring and response.

Explanation in Layperson’s Terms:

The proposed safety control system detects drowsiness and alcohol consumption using two simple sensors, an MQ-3 alcohol sensor and an IR sensor, to enhance road safety. By integrating these sensors with a microcontroller, the system triggers real-time alerts to prevent accidents. When alcohol is detected, the car automatically slows down and activates LED backlights to indicate the driver’s inebriated state. Similarly, when drowsiness is detected, the system reduces the car’s speed, sounds a buzzer, and activates lights to signal the driver’s drowsy condition. This approach addresses both drowsiness and alcohol consumption, two major contributors to road accidents. The system’s simplicity, affordability, and ease of integration make it a promising solution for enhancing vehicle safety.
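The alert logic described above can be summarised as a small state machine. The prototype itself runs embedded C on an Arduino; to keep all examples in one language, the sketch below simulates the same decisions in Python. The sensor thresholds, the PWM duty cycles, and the assumption that the IR sensor reports eye closure once per sample are hypothetical values chosen for illustration. They are not taken from the patent.

```python
from dataclasses import dataclass

# Hypothetical values for illustration only; not taken from the patent.
ALCOHOL_THRESHOLD = 400   # raw 10-bit ADC reading from the MQ-3 (0..1023)
EYES_CLOSED_LIMIT = 15    # consecutive closed-eye samples that count as drowsy
NORMAL_SPEED = 255        # PWM duty cycle sent to the motor driver normally
REDUCED_SPEED = 80        # PWM duty cycle used while an alert is active


@dataclass
class Outputs:
    motor_pwm: int
    buzzer_on: bool
    led_on: bool


class SafetyController:
    """Simulates one pass of the microcontroller loop per sensor sample."""

    def __init__(self):
        self.closed_count = 0  # consecutive samples with the eyes closed

    def step(self, mq3_reading, eyes_closed):
        # Count consecutive closed-eye samples; any open-eye sample resets the count.
        self.closed_count = self.closed_count + 1 if eyes_closed else 0
        drunk = mq3_reading >= ALCOHOL_THRESHOLD
        drowsy = self.closed_count >= EYES_CLOSED_LIMIT
        return Outputs(
            motor_pwm=REDUCED_SPEED if (drunk or drowsy) else NORMAL_SPEED,
            buzzer_on=drowsy,        # the buzzer is described for drowsiness
            led_on=drunk or drowsy,  # lights signal either condition
        )


ctrl = SafetyController()
print(ctrl.step(mq3_reading=600, eyes_closed=False))
# Outputs(motor_pwm=80, buzzer_on=False, led_on=True)
```

Requiring several consecutive closed-eye samples, rather than a single one, keeps an ordinary blink from triggering the drowsiness response.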

Practical Implementation

The current invention addresses common causes of road accidents by controlling the speed of the vehicle and sounding a buzzer to ensure the safety of the passengers and driver. To the best of the authors’ knowledge, th parameter detection, i.e. alcohol and drowsiness detection.

The present invention can be used in smart city and smart village applications to make roads safer for drivers and passengers. A few application areas are:

- Smart City and Smart Village: This technique and system can reduce road accidents caused by alcohol consumption and drowsiness.

- Automobile Industry: The system can be easily integrated with the existing control system in vehicles to ensure safety.

Future Research Plans:

To develop more secure and reliable safety mechanisms for safe driving.

- Research on Vehicle Density Detection Granted a Patent December 3, 2024

Research scholars Chetan Mylapilli, Jethin Sai Chilukuri, Rohith Kumar Akula, and Sana Fathima and Assistant Professor Dr Anirban Ghosh from the Department of Electronics and Communication Engineering at SRM University-AP have been granted Patent No. 202241044904 for their innovative work titled “A System and Method for Detecting Density-Based Intelligent Parallel Traffic.” The research develops an intelligent traffic control system that dynamically adjusts traffic signals based on real-time analysis of vehicle density, a significant step in applying technology to transportation efficiency.

Abstract

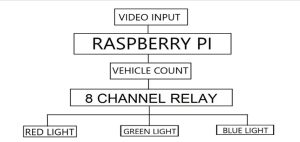

This work presents an intelligent traffic control system that addresses gaps in the current state of the art through a novel hardware-software integration. The system evaluates traffic density in each lane direction and dynamically adjusts traffic lights using a computational algorithm to significantly reduce waiting times at junctions. It also ensures safe pedestrian movement and enables parallel traffic flows. A Raspberry Pi serves as the system’s control unit, using video processing to determine traffic density, while LEDs simulate the traffic lights. The system integrates various hardware and software components, including the Raspberry Pi, LEDs, relay modules, VNC software, and sample traffic videos, to provide an efficient solution to the traffic management problem.

Explanation of Research in Layperson’s Terms

The current system uses a Raspberry Pi to control traffic lights based on real-time video of cars at intersections. It detects how many vehicles are in each lane and adjusts the lights to reduce waiting time. Pedestrian safety is managed by ensuring safe crossing times. LED lights simulate the traffic signals, and the system allows smoother traffic flow by handling vehicles moving in parallel. However, it can’t yet control turning vehicles or prioritize emergency vehicles.
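The abstract mentions a computational algorithm for adjusting the lights but does not describe it. The Python code below is a minimal sketch that assumes per-approach vehicle counts have already been extracted from the video. It groups parallel (non-conflicting) approaches into one phase so they move together, splits a fixed cycle between phases in proportion to their vehicle counts, and reserves a fixed pedestrian interval. The cycle length, the green-time bounds, and the two-phase layout are hypothetical. Like the system described above, the sketch does not handle turning movements or emergency priority.

```python
# Hypothetical timing parameters, for illustration only.
CYCLE_S = 120       # green time shared by the vehicle phases in one cycle (seconds)
MIN_GREEN_S = 10    # each phase gets at least this much green
MAX_GREEN_S = 90    # and at most this much
PEDESTRIAN_S = 15   # all-red interval reserved for pedestrian crossing

# Parallel, non-conflicting approaches share a phase and move at the same time.
PHASES = {
    "north-south": ("north", "south"),
    "east-west": ("east", "west"),
}


def green_times(vehicle_counts):
    """Return seconds of green per phase, plus the pedestrian interval.

    vehicle_counts: dict mapping an approach name to the number of vehicles
    detected on it (for example, by counting cars in a video frame).
    """
    demand = {phase: sum(vehicle_counts.get(a, 0) for a in approaches)
              for phase, approaches in PHASES.items()}
    total = sum(demand.values())
    times = {}
    for phase, count in demand.items():
        # Proportional share of the cycle; split evenly when no vehicles are seen.
        share = CYCLE_S * count / total if total else CYCLE_S / len(PHASES)
        times[phase] = min(MAX_GREEN_S, max(MIN_GREEN_S, round(share)))
    times["pedestrian"] = PEDESTRIAN_S
    return times


print(green_times({"north": 30, "south": 10, "east": 5, "west": 5}))
# {'north-south': 90, 'east-west': 24, 'pedestrian': 15}
```

Because of the clamping, the phase times need not add up to exactly `CYCLE_S`. In the example, the busy north-south phase is capped at 90 s, so the cycle shortens slightly. Under heavy imbalance, the minimum green prevents the quieter phase from being starved.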

Practical Implementation of the Research

The intelligent traffic control system significantly reduces congestion by dynamically adjusting traffic signals, leading to shorter wait times and smoother commutes. It helps lower pollution and fuel consumption by minimizing idle time at junctions, contributing to better air quality and conservation of resources. Pedestrian safety is improved through designated crossing times, reducing accidents. The system also supports economic growth by cutting time wasted in traffic, enhancing productivity.

Future Research Plans

Future research will focus on adding control for turning traffic and distinguishing between different vehicle types to enable emergency vehicle priority. To improve real-time video processing, the system will transition from Raspberry Pi to more efficient hardware like FPGAs or GPUs. Machine learning will be explored for better vehicle detection and traffic signal optimization. Integration with V2X communication will enhance traffic management, and real-world scalability will be tested for deployment in smart city environments.

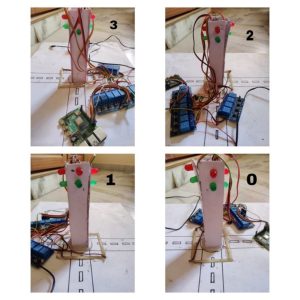

The prototype:

Figure 1. Image of the prototype

Figure 2. Working prototype

Figure 3. Block Diagram of the prototype

- Patent Published on Segmentation of Kidney Abnormalities November 29, 2024

Prompt and timely disease detection is an essential part of any treatment. Dr Pradyut Kumar Sanki, Dr Swagata Samanta, and research scholar Ms Pushpavathi Kothapalli from the Department of Electronics and Communication Engineering have worked towards timely and accurate diagnosis of kidney disease from medical images. Their innovative research, titled “A System and a Method for Automated Segmentation of Kidney Abnormalities in Medical Images”, has been published in the patent journal with Application No. 202441074765 and has significant potential for clinical adoption, improving patient care in kidney disease detection and treatment.

Abstract:

This research work aimed to develop an effective method for segmenting kidney diseases, including kidney stones, cysts, and tumours. The method achieved high accuracy in segmenting kidney diseases, with good mean precision and recall. The study employed techniques to efficiently select the most relevant features for kidney disease segmentation, identifying key features related to imaging and patient health. The method outperformed other approaches in terms of accuracy, precision, and recall. The study used a comprehensive dataset of kidney disease patients to train and test the segmentation method. The results suggest that this method has the potential to be widely adopted in clinical settings, contributing to more accurate and efficient diagnostic tools for kidney disease segmentation and improving patient care.
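The abstract reports accuracy, precision, and recall without giving their definitions. A common pixel-wise way to compute these metrics for segmentation masks is sketched below in Python. The label encoding (0 for background, 1 to 3 for stones, cysts, and tumours) and the choice to average precision and recall over the classes present in an image are assumptions made for illustration, and the function names are hypothetical.

```python
import numpy as np

# Hypothetical label encoding, for illustration only.
CLASSES = {1: "stone", 2: "cyst", 3: "tumour"}


def per_class_metrics(pred, truth):
    """Pixel-wise precision and recall for each abnormality class in a label map."""
    pred, truth = np.asarray(pred), np.asarray(truth)
    results = {}
    for label, name in CLASSES.items():
        p, t = pred == label, truth == label
        tp = int(np.sum(p & t))    # pixels correctly assigned to this class
        fp = int(np.sum(p & ~t))   # pixels wrongly assigned to this class
        fn = int(np.sum(~p & t))   # pixels of this class that were missed
        if tp + fp + fn == 0:
            continue               # class absent from both masks: nothing to score
        results[name] = {
            "precision": tp / (tp + fp) if tp + fp else 0.0,
            "recall": tp / (tp + fn) if tp + fn else 0.0,
        }
    return results


def mean_metrics(pred, truth):
    """Overall pixel accuracy plus precision and recall averaged over classes."""
    per_class = per_class_metrics(pred, truth)
    accuracy = float(np.mean(np.asarray(pred) == np.asarray(truth)))
    if not per_class:
        return {"accuracy": accuracy, "mean_precision": 0.0, "mean_recall": 0.0}
    return {
        "accuracy": accuracy,
        "mean_precision": float(np.mean([m["precision"] for m in per_class.values()])),
        "mean_recall": float(np.mean([m["recall"] for m in per_class.values()])),
    }


truth = [[0, 1, 1], [0, 2, 2], [0, 0, 0]]
pred = [[0, 1, 0], [0, 2, 2], [0, 2, 0]]
print({k: round(v, 3) for k, v in mean_metrics(pred, truth).items()})
# {'accuracy': 0.778, 'mean_precision': 0.833, 'mean_recall': 0.75}
```

Pixel accuracy is dominated by the large background region, so precision and recall per abnormality class give a clearer picture of how well small lesions such as stones are delineated.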

Practical Implementation:

The practical implementation of the research involves deploying a system for real-time segmentation of kidney diseases, including kidney stones, cysts, and tumours. The method achieved high accuracy in segmenting kidney diseases using deep learning techniques. The model can quickly identify and delineate diseased areas within the kidney. The study employed techniques to select the most relevant features for kidney disease segmentation, focusing on key imaging and health-related characteristics. The method outperformed other approaches in terms of accuracy, precision, and recall. The study utilized a comprehensive dataset of kidney disease patients to train and test the segmentation method. The results suggest that the method has the potential to be widely adopted in clinical settings, contributing to more accurate and efficient diagnostic tools for kidney disease segmentation and improving patient care.

Future Research Plans:

The future plans for the work on chronic kidney disease (CKD) detection and segmentation involve several key areas:

Expanding Disease Coverage: Future research could involve adapting and expanding the segmentation model to detect and segment other kidney-related abnormalities and diseases, such as renal infections or congenital disorders, thereby increasing the versatility and applicability of the tool.

Improving Model Accuracy and Robustness: To further improve accuracy, additional deep learning techniques, such as ensemble learning or advanced attention mechanisms, could be explored. Testing on larger and more diverse datasets could help make the model more robust and generalizable across various patient demographics and imaging devices.

Integration with Multi-modal Data: Incorporating other data types, such as blood test results, genetic markers, or electronic health records, could be an exciting avenue to explore. This would create a multi-modal approach, combining imaging data with clinical information, potentially improving diagnostic accuracy and providing more comprehensive insights into kidney health.

Real-world Clinical Trials: Conducting clinical trials in real-world settings to validate the effectiveness of the segmentation tool with healthcare professionals. Gathering feedback from these trials would provide valuable insights into user experience and model performance, facilitating further refinement.

Developing a User-friendly Interface: Future work could involve creating an easy-to-use interface that seamlessly integrates with hospital systems. This interface would allow healthcare providers to interact with the segmentation results, adjust parameters, and view comprehensive diagnostic reports.

Exploring Semi-supervised and Unsupervised Learning Approaches: To reduce the reliance on labeled data, which can be time-consuming to obtain, exploring semi-supervised or unsupervised learning techniques could be beneficial. These approaches might help in training the model on large datasets without extensive labeling, thereby improving scalability.

Longitudinal Studies for Prognostic Analysis: Research could also focus on tracking patients over time to understand how kidney disease progresses and how segmentation results correlate with long-term health outcomes. This could help in creating predictive models for disease prognosis.
